Describe Quality Issues in Images Taken by People Who Are Blind

Overview

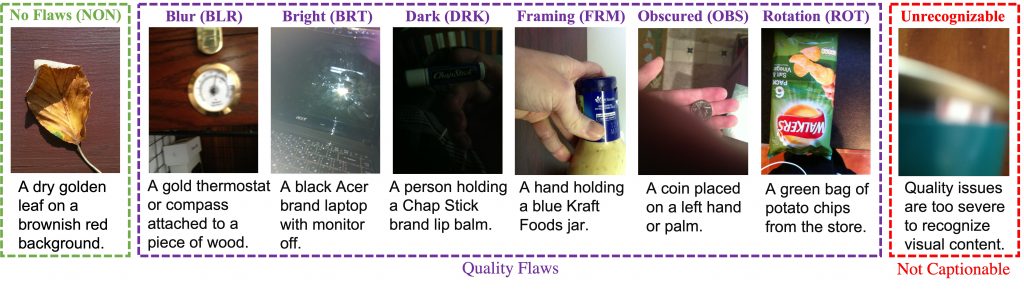

Motivated by the aim to tie the assessment of image quality to practical vision tasks, we introduce a new image quality assessment dataset that emerges from a real use case. Our dataset is built around 39,181 images taken by people who are blind and who were authentically trying to learn about the images they took using the VizWiz mobile phone application. Users submitted these images to overcome real visual challenges that they faced in their daily lives. Of these images, 17% were submitted to collect image captions from remote humans, and the remaining 83% were submitted with a question to collect answers to their visual questions.

For each image, we asked crowdworkers either to supply a caption describing it or to indicate that the quality issues are too severe for them to create a caption. We call this task the unrecognizability classification task. We also asked crowdworkers to label each image with perceived quality flaws: blur, overexposure (bright), underexposure (dark), improper framing, obstructions, and rotated views. We call this task the quality flaws classification task. Altogether, we call this dataset VizWiz-QualityIssues. Algorithms for these tasks can be of immediate use to blind photographers, for example by warning a user that a photo is too flawed to be recognized before it is submitted.

Dataset

The VizWiz-QualityIssues dataset includes:

- 23,431 training images

- 7,750 validation images

- 8,000 test images

- for each image, the number of votes, out of five crowdworkers, for each quality flaw and for unrecognizability
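
Because every label is a vote count from five crowdworkers, training or evaluating a classifier usually starts by turning counts into targets. The sketch below shows two options under stated assumptions: hard labels using a hypothetical majority threshold (at least 3 of 5 votes) and soft labels equal to the vote fraction. The names `votes_to_labels` and `votes_to_soft_labels`, the `threshold` default, and the reading of the `NON` and `OTH` codes as "no flaws" and "other" are illustrative assumptions, not the official protocol.

```python
# Flaw codes used in the annotation files. BLR, BRT, DRK, FRM, OBS, and ROT
# match the six flaws above; reading NON as "no flaws" and OTH as "other"
# is an assumption.
FLAW_CODES = ("BLR", "BRT", "DRK", "FRM", "NON", "OBS", "OTH", "ROT")
NUM_WORKERS = 5  # each image was labeled by five crowdworkers


def _check(name, votes):
    """Reject vote counts outside the possible range 0..5."""
    if not 0 <= votes <= NUM_WORKERS:
        raise ValueError(f"{name}: expected 0..{NUM_WORKERS} votes, got {votes}")


def votes_to_labels(flaw_votes, unrecognizable_votes, threshold=3):
    """Hard labels: positive when at least `threshold` of the 5 workers agree.

    threshold=3 (a simple majority) is a hypothetical choice.
    """
    for code, votes in flaw_votes.items():
        _check(code, votes)
    _check("unrecognizable", unrecognizable_votes)
    labels = {code: flaw_votes.get(code, 0) >= threshold for code in FLAW_CODES}
    labels["unrecognizable"] = unrecognizable_votes >= threshold
    return labels


def votes_to_soft_labels(flaw_votes, unrecognizable_votes):
    """Soft targets: the fraction of the five workers who chose each label."""
    soft = {code: flaw_votes.get(code, 0) / NUM_WORKERS for code in FLAW_CODES}
    soft["unrecognizable"] = unrecognizable_votes / NUM_WORKERS
    return soft
```

For a record with 5 blur votes, 3 bright votes, and 1 framing vote, the hard labels mark blur and bright as positive and framing as negative, while the soft variant keeps the disagreement visible as 1.0, 0.6, and 0.2.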

The download files are organized as follows:

- Images: training, validation, and test sets

- Annotations:
  - Challenge annotations (training and validation)
  - Evaluation annotations (VizWiz_quality_issues_train_val_test.csv)

- API:
  - Image annotations are split into three JSON files: train, validation, and test. Quality issue labels are publicly shared for the train and validation splits and hidden for the test split.
  - An API is provided to demonstrate how to parse the JSON files and evaluate methods against the ground truth.
  - Details about each image are in the following format:

        {'flaws': {'BLR': 5, 'BRT': 3, 'DRK': 0, 'FRM': 1,
                   'NON': 0, 'OBS': 0, 'OTH': 0, 'ROT': 0},
         'image': 'VizWiz_train_00022585.jpg',
         'unrecognizable': 4}

    Each number is how many of the five crowdworkers selected that flaw or judged the image unrecognizable. BLR, BRT, DRK, FRM, OBS, and ROT correspond to blur, bright, dark, framing, obstruction, and rotation; OTH and NON denote other flaws and no flaws.

The VizWiz-VQA EvalAI evaluation servers are now deprecated and no longer maintained. To support continued benchmarking, we publicly release the annotation JSON files for self-evaluation.
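
The per-image record format above can be read with the standard library alone. This is a minimal sketch, assuming each split file is a JSON list of such records (the example record is printed as a Python dict, so the file itself is assumed to use standard double-quoted JSON). The functions `load_annotations` and `count_majority_flaws` and the 3-of-5 threshold are hypothetical; the provided API remains the reference parser.

```python
import json
from collections import Counter


def load_annotations(path):
    """Read one split and index its records by image file name.

    Assumes the file holds a JSON list of records with the keys
    'image', 'flaws' (flaw code -> votes), and 'unrecognizable' (votes).
    """
    with open(path, encoding="utf-8") as f:
        records = json.load(f)
    return {rec["image"]: rec for rec in records}


def count_majority_flaws(annotations, threshold=3):
    """Count images per flaw code (and unrecognizable) with >= threshold votes."""
    counts = Counter()
    for rec in annotations.values():
        for code, votes in rec["flaws"].items():
            if votes >= threshold:
                counts[code] += 1
        if rec["unrecognizable"] >= threshold:
            counts["unrecognizable"] += 1
    return counts
```

For example, `count_majority_flaws(load_annotations("train.json"))`, with a hypothetical path to the downloaded train split, gives a quick view of how often each flaw reaches a majority, which is useful for spotting class imbalance before training.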

Publications

The new dataset is described in the following publication:

Assessing Image Quality Issues for Real-World Problems

Tai-Yin Chiu, Yinan Zhao, and Danna Gurari. IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2020.

Code

Code for the baseline models in the paper and for evaluation: link
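
With the annotations available for self-evaluation, both tasks can be scored from a model's per-image scores. One common metric for such binary labels is average precision (AP); the linked code is the reference for how the paper evaluates, so treat this as a stand-in sketch, not the official evaluation. It uses the step-wise definition AP = sum over thresholds of (R_n - R_{n-1}) * P_n, treating tied scores as a single threshold, and averages AP over labels (for example, the flaw codes) without weighting. The function names are hypothetical.

```python
def average_precision(y_true, scores):
    """Step-wise AP: sum of (recall gain) * precision at each distinct score."""
    if len(y_true) != len(scores):
        raise ValueError("y_true and scores must have the same length")
    total_pos = sum(1 for y in y_true if y)
    if total_pos == 0:
        raise ValueError("average precision is undefined without positives")
    # Rank images from most to least confident.
    pairs = sorted(zip(scores, y_true), key=lambda p: -p[0])
    ap, tp, fp, prev_recall, i = 0.0, 0, 0, 0.0, 0
    while i < len(pairs):
        score = pairs[i][0]
        # Consume every image tied at this score as one threshold.
        while i < len(pairs) and pairs[i][0] == score:
            if pairs[i][1]:
                tp += 1
            else:
                fp += 1
            i += 1
        recall = tp / total_pos
        ap += (recall - prev_recall) * (tp / (tp + fp))
        prev_recall = recall
    return ap


def mean_average_precision(truth_by_label, scores_by_label):
    """Unweighted mean of per-label AP, e.g. over the quality flaw codes."""
    aps = [average_precision(truth_by_label[k], scores_by_label[k])
           for k in truth_by_label]
    return sum(aps) / len(aps)
```

Ranking the two positives of `[1, 0, 1, 0]` first and third yields an AP of 5/6, since the second positive is found at precision 2/3; a perfect ranking yields 1.0.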

Contact Us

Please send questions to Tai-Yin Chiu at chiu.taiyin@utexas.edu.